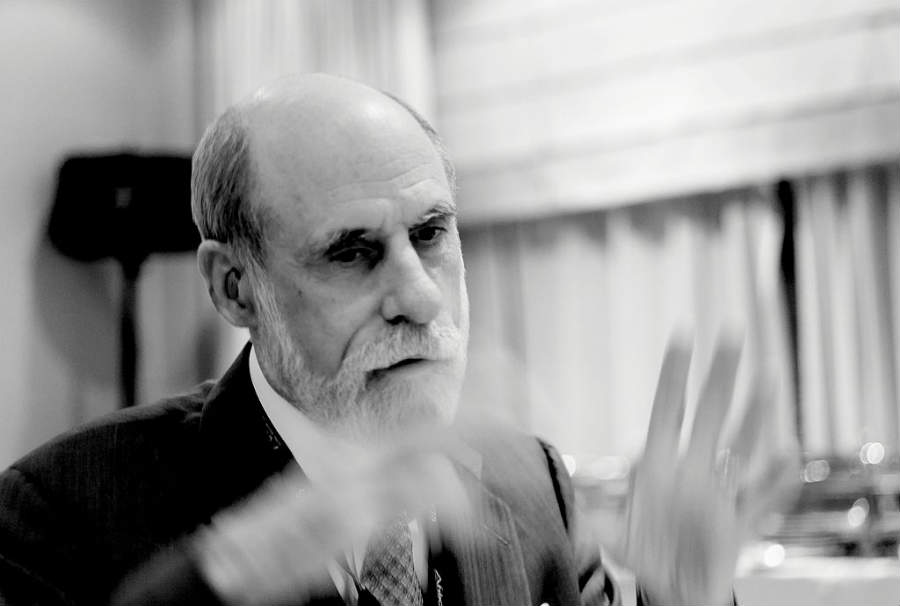

In an exclusive interview, Visualfy talks to Vint Cerf, Vice President and Chief Internet Evangelist at Google, also known as one of the fathers of the Internet.

María José Millán (CSO at Visualfy): Today, with great honor, we welcome to Visualfy Vint Cerf, Vice President and Chief Internet Evangelist for Google. Vint is one of the ‘fathers of the Internet’, as you all probably know, and is also a founder of the international movement of change makers ‘People Centered Internet’, created to ensure that the Internet continues to improve people’s lives and to be a positive force for good.

Good morning, Vint. It’s a pleasure for Visualfy to have you here. Thanks so much for such a great opportunity to share time with you and for helping us remind young people with hearing loss that they can be whatever they want, even the Vice President of Google.

I am María José Millán. I am in charge of Marketing at Visualfy, and with me is Manel Alcaide, founder of Visualfy. As you know, we are a startup formed by deaf and hearing people, and we create technology for people with hearing loss and for companies that are committed to accessibility.

Manel: Hi Vint, I’m really happy to have you with us. We are looking forward to getting to know you better, so let’s get down to business! As we have said, you are one of the fathers of the Internet. Seeing what the Internet means for millions of people nowadays, did you imagine its massive success back then?

Vint Cerf: Well, I think back in the 1970s, when Robert Kahn and I did the original design, we were focused on a problem that the American Defense Department was trying to solve, which was how to use computers in command and control. So we were focused on an engineering problem. But it was very clear, even in the predecessor system called ARPANET, which was a test to see if we could get different brands of computers talking to each other on a homogeneous network, that the engineers who were using it saw its social effects in addition to its technical potential. We created electronic mail, we had mailing lists, we had people discussing, you know, who were the best science fiction writers or where the best restaurants were.

So it was very clear, when the internet was being designed and built, that there was a social component to it, and it also had a rather direct impact on people with hearing impairment, because a lot of the communication on the net is text: it’s written, by email and chat and things like that. So, from my point of view, as a person with a hearing impairment, having a network dedicated to an alternative way of communicating was really very welcome, so I could see some of that.

But the idea that this would become a global system at the scale it is today was not assured. I will say that when we were doing the design we anticipated that it would have to be global in scope in order to serve the military application, because the Defense Department had to be able to operate anywhere in the world. So in some sense we tried to account for that in the design. That’s not the same as having nearly four billion people online. That’s an evolution which is the consequence of its utility in a variety of social, public and private settings, and also of an enormous private sector investment. None of this would be at the scale it currently is without that. The private sector motivation came much later, in the late 1980s and early 1990s. In particular, Tim Berners-Lee and the World Wide Web emerged out of this cauldron of innovation.

MJ: You are now Vice President and Chief Internet Evangelist for Google, and we are curious! What is a day in the life of a Chief Internet Evangelist like? What do you do?

V.C.: Well, one example of the things I do is interviews like this one over the net, actually quite often, and I frequently make little videos and things like that, which I send to conferences that I’m not able to attend in person. So part of my job is to be present in a variety of different venues, sometimes in person and sometimes remotely. In fact, I’ll be in Barcelona in a couple of days to receive an award from the Catalan government. I travel a great deal, about 80% of my time, and I often go to places where there is no Internet in order to encourage people to build the infrastructure needed.

I spend a significant part of my time on policy associated with the internet, whether it’s the social impacts of the internet, how we cope with some of the negative behaviours that we see, or its positive benefits: we see electronic commerce, we see new jobs being created, new businesses being created on top of the internet infrastructure. So I have a policy role to play, and since I am at Google, which is a high-tech company, I spend a lot of my time on technical matters, trying to understand how to improve the way the system works and what new things we can do on top of it. That is particularly true at Google, where we keep expanding the range of products and services that we offer: we are in the hardware business, we make phones, we make Google Home and other appliances and Internet of Things devices. So I can’t imagine a more fun job than the one I have, because I get to pay attention to so many different things that are associated with the internet.

Manel: Thousands of people with hearing loss around the world dream about developing careers in technology, but they still face barriers due to a lack of accessibility. What would you recommend to them?

V.C.: Accessibility is a very important term and we should take it as broadly as we can. We have possibly the most flexible device ever invented; it’s called the computer. It will do anything that we can program it to do. So there really aren’t any limits except our imagination and our ability to write software. And yet, in spite of that, we can all confirm that the applications available to us on our laptops, desktops, mobiles or tablets are not very satisfactory when it comes to accessibility. And I’m not happy about that.

It turns out that it is not so easy to figure out how to design an application that is accessible, or that can be made accessible, to someone with some range of vision impairment, hearing impairment, movement impairment or cognitive impairment. As the designer of an application you have to think about the design from the very beginning on the presumption that it must be accessible to everyone. So: how do I design this application so that it can adapt to the kinds of accommodations that are needed for accessibility? Not everyone who is designing and programming has an intuition about how to do that. So we are really suffering from a lack of deep understanding of which practices and techniques make things accessible.

There is a tendency to design or build a product or a service and then try to overlay accessibility on top of it. An example of this would be screen readers, which interpret the HTML of the World Wide Web and try to voice what is on the screen. I find that very unsatisfactory in many respects, because the designers of the web pages being voiced didn’t think about how to present this two-dimensional information in a serial way. If it’s visual, you can do whatever you want.

So I’m a huge fan of deeper research into what makes things accessible, but at the same time I’m rather encouraged by the flexibility of programmable devices to adapt to people’s needs. So we need to work harder to build accessibility frameworks that cooperate with the user to do this kind of adaptation.

So we still have a long way to go. I am a noisy voice at Google on this topic, and I will continue to be one until I’m satisfied that we have figured out how to do a good job of making our products accessible.

MJ: Don’t you think that we need deaf and hard of hearing people in technology teams to, you know, gain that understanding?

V.C.: So I have two examples for you that I want to encourage the people you work with to think about. There are two deaf people at Google that I work with. One of them is Ken Harrenstien. Ken is deaf and signs. He is the person who worked really hard to get automatic captioning onto YouTube videos. Built into this, by the way, is a way of downloading the captions generated by the computer so that you can edit them to fix the mistakes and upload them again, which gives you high-quality captions. So a deaf person led that effort, and I think that makes a lot of good sense.

The second is Dimitri Kanevsky. Dimitri was born deaf and is a Russian speaker; now he is one of our employees, and he speaks English as well, but with a very heavy accent. He has developed automatic captioning, or automatic transcription, in several applications. The first is an application that runs on your mobile: it takes the speech it hears and puts it up as text that scrolls down the screen. He built it because he needed it at meetings and conferences where he couldn’t hear but was having conversations with people. It works in, I don’t know, 40 or 50 different languages, and you can pick a primary and a secondary language and switch back and forth between them.

So that’s one beautiful application, but an even more exciting one is our video conferencing capability, called Google Meet. In the Google Meet system we now have the ability to automatically caption whatever anyone is saying in the conference. So you might have a group of different people communicating, and whatever anyone says is captioned automatically at the bottom of the screen. That’s a very new capability, building on our success in using machine learning for speech understanding, or speech recognition. The technology of machine learning has advanced dramatically over the last decade, to the point where we can now do a very good job of understanding speech and, of course, generating speech. So we have devices, like Google Home, that can speak. Of course, we also need those same devices to caption the responses that come back, so in addition to the smart speaker we have a display-based Google Home device that can answer both in speech and in text.

MJ: We are so glad to hear that. Vint, we have all read about your love story with your wife. She was born deaf and was able to hear after a cochlear implant at the age of 53. How has technology impacted your life, and how do you think it is improving the lives of people with disabilities? Do you think it is a force for good?

V.C.: Yes, I am a huge fan of technology. I wouldn’t be where I am if I weren’t. Just to clarify one thing: my wife Sigrid was actually born with normal hearing, but she lost it when she was three from spinal meningitis. The reason that’s important is that she was what is called post-lingually deaf: she could hear, she could speak, she had auditory memory. That turns out to be rather important, because when she finally had a cochlear implant 50 years later her brain had not forgotten its auditory learning, and so she adapted very, very quickly to the implant. Within 20 minutes or so of activating it for the first time, she picked up the phone and we talked to each other on the phone for the first time in our 30 years of marriage.

Subsequently, she had a second implant 10 years later, and more recently one of the implants failed and she had a re-implant, which has also been successful.

The other thing happening is that the speech processors that go with cochlear implants have also improved over time, so we see her right-ear implant performing better than ever because she got new speech processors to go with it.

So you can imagine that I am a huge fan of technology that, well, augments our human capabilities, either to bring them up to nearly normal or even to surpass ordinary human capabilities. We don’t need to stop at normal human capacity. Think for just a minute about being able to hear what dogs hear, above 20,000 cycles a second (maybe we wouldn’t want to, but you could do that), or to see with X-rays, to see in infrared, to see in ultraviolet, to improve our vision not only to correct blindness but maybe even to see like a microscope does.

So I have this excitement about electronic and neural interfaces that allow us to bring new capabilities to human intellect. I’d like to go on just a little bit on this topic, because a very important pair of researchers in history, J.C.R. Licklider and Douglas Engelbart, were convinced in the 1950s that computers could be used to augment human intellect. Whenever you do a Google search today, whenever you use Google Trends, whenever you’re watching a video and seeing an automatic caption, you are using artificial intelligence; you’re using computer capability to augment your own capacity.

And so at Google we see all of these things as tools to improve our own ability to function, to do things that we couldn’t do on our own. I am very excited about that potential, but I have to say that I am also very concerned about becoming dependent on those technologies, or having the technologies turned against us.

In the internet world, for example, especially in social networking, you can see how one person can use computers and botnets to leverage their particular point of view, making it appear that tens of thousands of people think the way they do. This is a kind of augmented disinformation and misinformation which is harmful, to say nothing of denial-of-service attacks, malware attacks and other kinds of things.

So we end up realizing that technology is a two-edged sword and it can be abused. In addition to finding ways to improve our lives using these technologies, we also have to cope with people who try to use them for harmful purposes. That is the big challenge: a social and economic challenge that we must confront, and not one solvable purely with technology.

MJ: We have already talked about it, but knowing the potential of the internet and technology to help people, do you think that companies should focus more on making their technologies accessible to everyone?

V.C.: Yes, you’re preaching to the converted. I believe that companies that offer products and services should be thinking about the full range of needs of the population on the planet, and that includes people with disabilities.

Let me try to modulate this a little bit. As you both know, disabilities come in a variety of different flavors, so to speak. Deafness varies from what can be corrected with a hearing aid or an amplifier to what needs a cochlear implant. We can make the same argument for various forms of visual impairment. It is likely that not every product can adapt to the needs of every form of disability, especially in very extreme cases.

So the question is what you do about that, and one answer is to design and build products that have standardized interfaces, so that if we want or need to, we can build a device which is specific to a particular user with a disability and which then uses some standard interface in order to manage and control the application. So standardization would be our friend here: we use a device which is specialized to our needs, and it interfaces to an application.

So I can see responsibilities ranging from the product maker actually doing everything possible to deal with the accessibility accommodation, to standardizing interfaces that let another person or another company provide an even more refined capability for adapting to the particular user’s needs. But we should incorporate all that thinking in our product development.

MJ: Among the projects that Google has underway, are there any focused on accessible technologies for people with hearing loss? You’ve mentioned captioning… Could you tell us about these kinds of projects?

V.C.: Well, within the company we’ve established what we call Google accessibility ratings, you know, from 1 to 4. And we try to build our products so that they are maximally accessible. We still have a ways to go even to understand how to do that before actually doing it, but there’s a great deal of attention being paid to it inside the company.

We have a central group that is focused on accessibility. They have correspondents, so to speak, in the various product areas that Google is working on, in order to help them improve their implementation of accessibility mechanisms.

So for people with hearing impairments I think we have pushed pretty far along with automatic speech recognition technology, but we want to address an even broader range of disabilities than just hearing impairment. At the same time, we are continuing to work on making our products more accessible specifically for people with hearing impairment.

At some point, for example, we should be able to support real-time translation of languages in a visible way. So if one person is speaking Russian and someone else is speaking English, they can both see the text of what is being said in the language of their choice. A good example would be two deaf people speaking two different languages, both communicating because their mobiles are doing the translation and displaying the speech as text. That gets me all excited about being able to help people over that barrier.

M.: Technology is moving so fast and changing the way we do everything. Everything is possible today. In your view, what has been the biggest technological advance of the last ten years, and how do you think technology will improve accessibility in the coming years?

V.C.: So I think that probably the most important technology to become visible is machine learning: the ability to build multilayer neural networks that can be adapted to applications including speech understanding, speech recognition and speech translation.

And image recognition of all kinds: for example, navigation, helping a blind person know what’s going on and what is in their surroundings, situational awareness, things like that. Self-driving cars make heavy use of machine learning in addition to other AI. So it’s a particularly notable computing development over the last decade or so.

Looking forward into the future, one of the things we are becoming increasingly capable of doing is interfacing computing devices, electronic devices, to our nervous system. A cochlear implant is a good example of a sensory-neural interface with electronics. We’re seeing similar kinds of optical devices that restore vision, and we can see sensory-motor possibilities for people who have lost control of their arms and legs because of a spinal injury: there may be ways of propagating the neural signals through a device so that they regain control over their limbs. We’re seeing extraordinary work for people who have lost limbs, using neural interfaces as opposed to mechanical ones in order to control artificial limbs.

So I’m very excited about the sensory-neural and sensory-motor capabilities coming in the near future. Looking much farther into the future, and much more speculative, is the possibility of cognitive interfaces between computer devices and our brains.

As I get older I notice that I forget things more, and so what I need is an augmented memory; I’m hoping someday I’ll have a little memory implant. I think this kind of cognitive interface is extremely speculative. It’s very much science fiction right now, because we don’t really understand how the brain stores memory, how we could make a memory noticeable, or how we could take computer memory and draw your conscious attention to it, other than the way we do it now, which is putting it in a computer or doing a Google search, which is my way of recovering lost memory.

So that’s very speculative and in the farther future, but it’s not out of the range of possibility, given how much we have learned over the past 50 or 100 years about how human bodies work.

MJ: Speaking of image recognition, at Visualfy we work with people with all kinds of hearing loss and, you know, some of them use sign language to communicate. We are very worried because voice-commanded devices can leave behind people who cannot use their voice. Do you have any projects regarding sign language recognition?

V.C.: So we actually have two things going on. Along with other people, we have been exploring whether it is possible to do sign recognition through image recognition. It requires a very high-quality video stream that isn’t broken up, because we have to see motions that are really quick. I’m not a signer myself, and when I watch people signing I’m amazed at how quickly they are able to communicate, so I think we’re still some way from a reliable way to do this.

Typically you end up wearing a glove or some sort of preconfigured hardware. Microsoft, for example, has one; I can’t remember what they call it, but…

There are devices that can figure out body position, but I don’t think they run fast enough to do a very good job with signing. Another interesting thing concerns people who were born deaf, or whose speech is difficult to understand: we’ve even gone to the trouble of training our machine learning models to understand this particular kind of deaf speech and translate it into more typical speech. So it might help someone whose ability to articulate is somehow impaired, providing better-quality speech by using machine learning and voice generation. That’s another attempt to help someone whose situation is not satisfactory interact easily with the general public.

Vint Cerf says goodbye to Visualfy…

Well, I hope that you are successful in this effort. Of course I’m a big fan of these kinds of research programs, and I hope that we can produce usable outcomes that improve the lives of people with hearing impairment.

Thanks so much for letting me join you today, I really appreciate it. And of course I will be happy to reinforce your message, which is that technology can help and we just need to put our minds to it.